Today (9/30) is my birthday. I thought I would break down the various birthday wishes and make some graphs, cause I love me some graphs.

Some numbers

- Total of 41 Happy Birthdays (HBDs) received

- Earliest bday wish received 9/29/2014 @ 8:12AM from my friend Wendy. Classic Wendy, wishing me a happy bday on the wrong day. Better way early than never.

- HBDs started at 5:30AM on 9/30. First HBD received on Facebook.

- Royals playing first Postseason game in 29 years tonight. What a game...just had to mention that.

Hour of Day Breakdown

- Huge spike in HBDs during the 11:00AM hour.

- No HBDs received around 5:00PM this evening. People must have been eating dinner.

Who Wished

Most HBDs were received from Facebook Friends, denoted as FFriends. Facebook Friends are those folks that you only interact with via Facebook. These could be friends from days past, acquaintances, or just straight up Facebook Friends.

Friends here are people I consider closer than FFriends. These are friends that I maintain close contact with, the type of people that would help you hide the body if needed. Of course there is only one wife in this category.

- Most common family member wishing HBDs was cousin, with 3 HBDs.

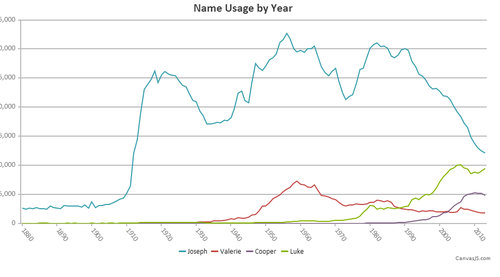

- Most popular name Matt with 3

- Names beginning with M come in first with 6 HBDs

Method Received

- 72% of all HBDs were received via Facebook. Friends and FFriends alike use the common social media platform.

- My parents used the good ol' fashioned phone method. It was good to talk to them; they are out of town traveling around.

- Coop and Val wished me a happy birthday with their voice.

- I received 5 cards in the mail